
I am a Ph.D. candidate at CILab, Yonsei University, Seoul, South Korea. My research focuses on 2D/3D computer vision, including generative models, and its applications to intelligent vehicles and robotic systems. I am always open to collaborations; please feel free to contact me if you have any questions or suggestions. :)


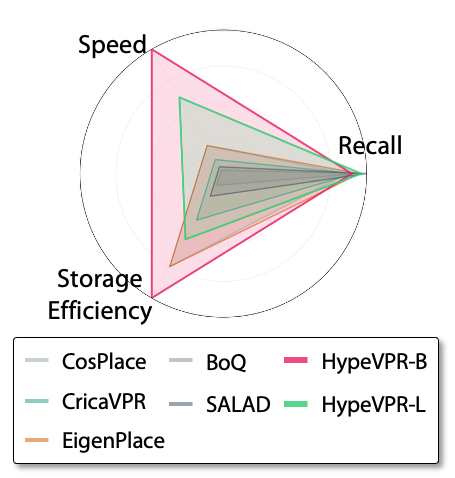

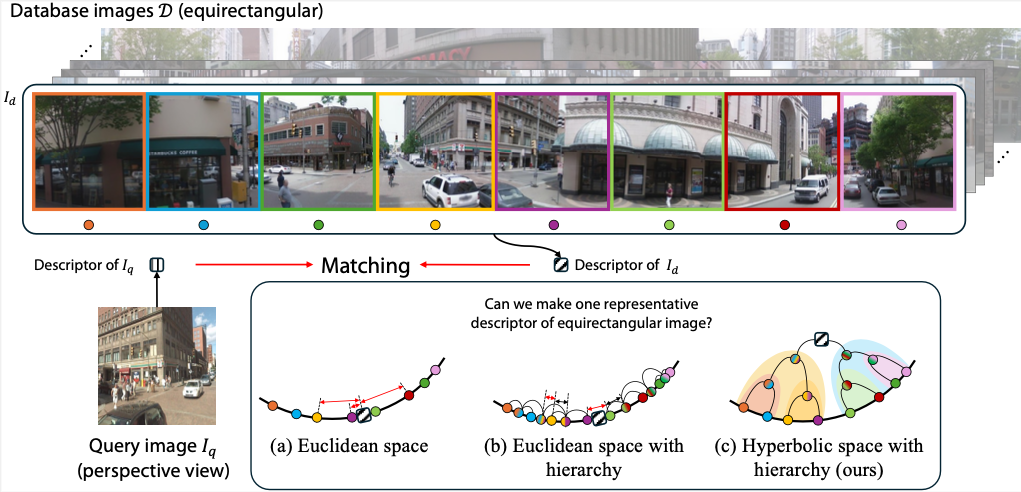

Suhan Woo, Seongwon Lee, Jinwoo Jang, Euntai Kim

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2026), Denver, USA (Acceptance Rate: 25.4%)

Paper / Project Page / bib

We propose a novel perspective-to-equirectangular (P2E) visual place recognition (VPR) method that leverages the properties of hyperbolic space to address the matching problem between perspective views and equirectangular images.


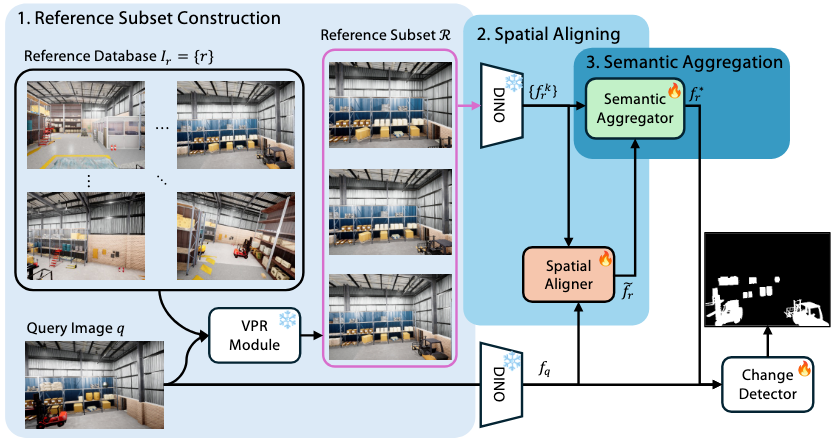

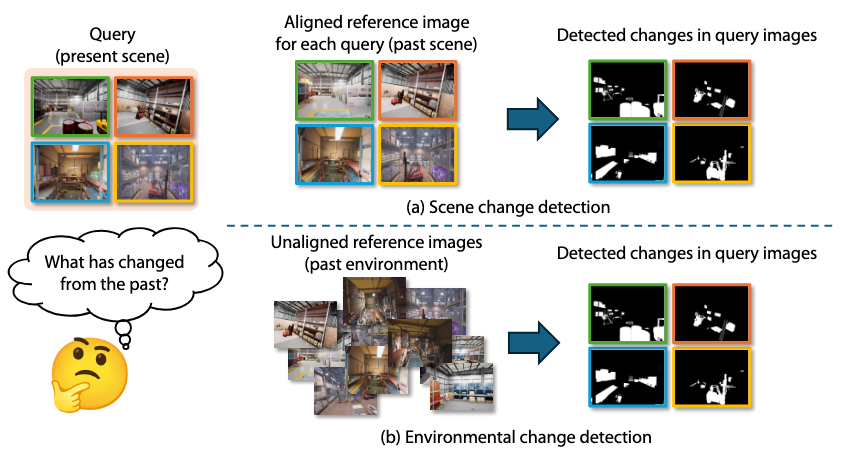

Kyusik Cho, Suhan Woo, Hongje Seong, Euntai Kim

Under Review

Paper / bib

We propose a novel Environmental Change Detection (ECD) framework that handles misaligned and uncurated reference images by aggregating multiple reference candidates and rich semantic cues, achieving robust, state-of-the-art performance on standard benchmarks.


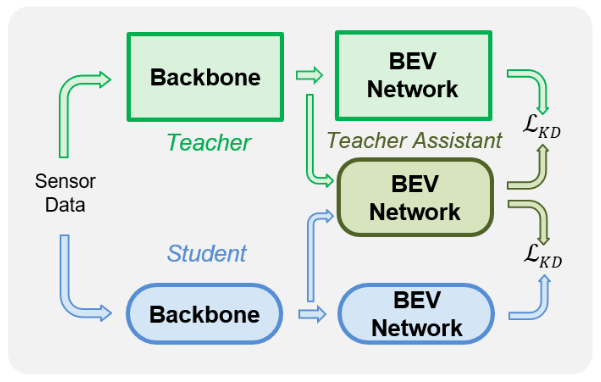

Beomjun Kim, Suhan Woo, Sejong Heo, Euntai Kim

IEEE International Conference on Robotics & Automation (ICRA 2026), Vienna, Austria (Acceptance Rate: 38.0%)

Paper / bib

We propose BridgeTA, a cost-effective knowledge distillation framework that bridges the representation gap between LiDAR-camera fusion and camera-only models through a lightweight teacher-assistant network, achieving superior efficiency and performance in BEV map segmentation.


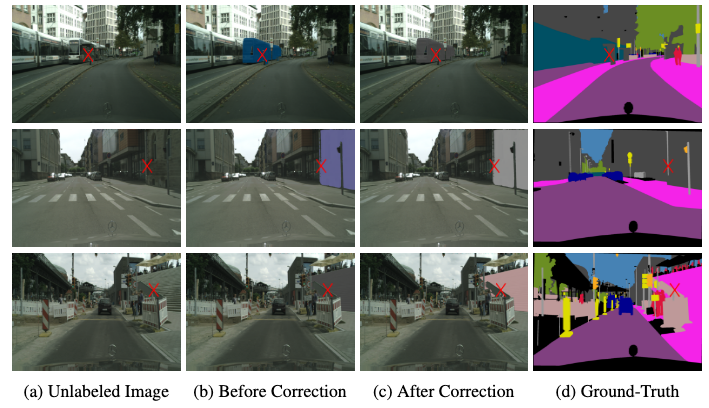

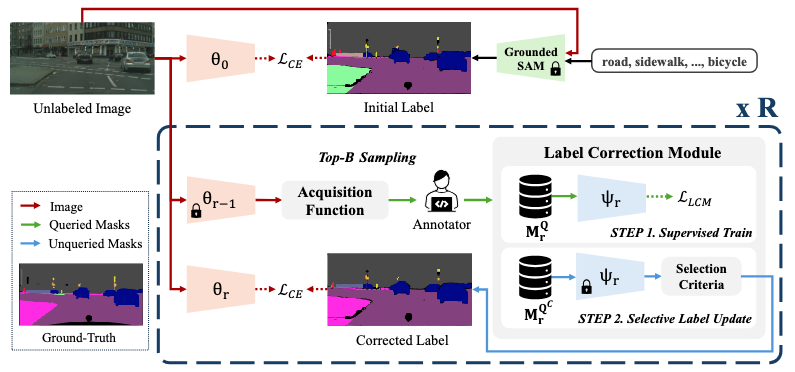

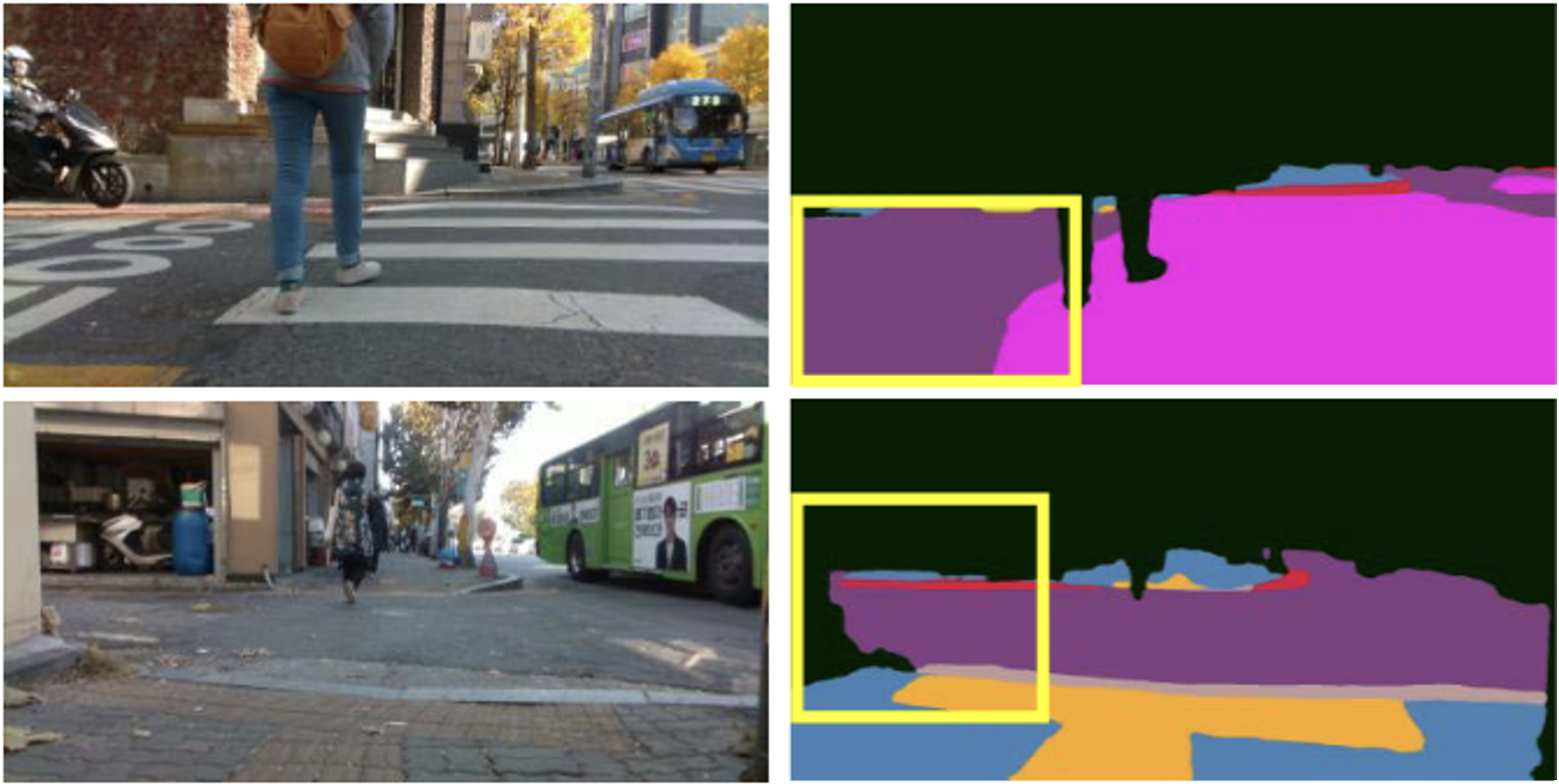

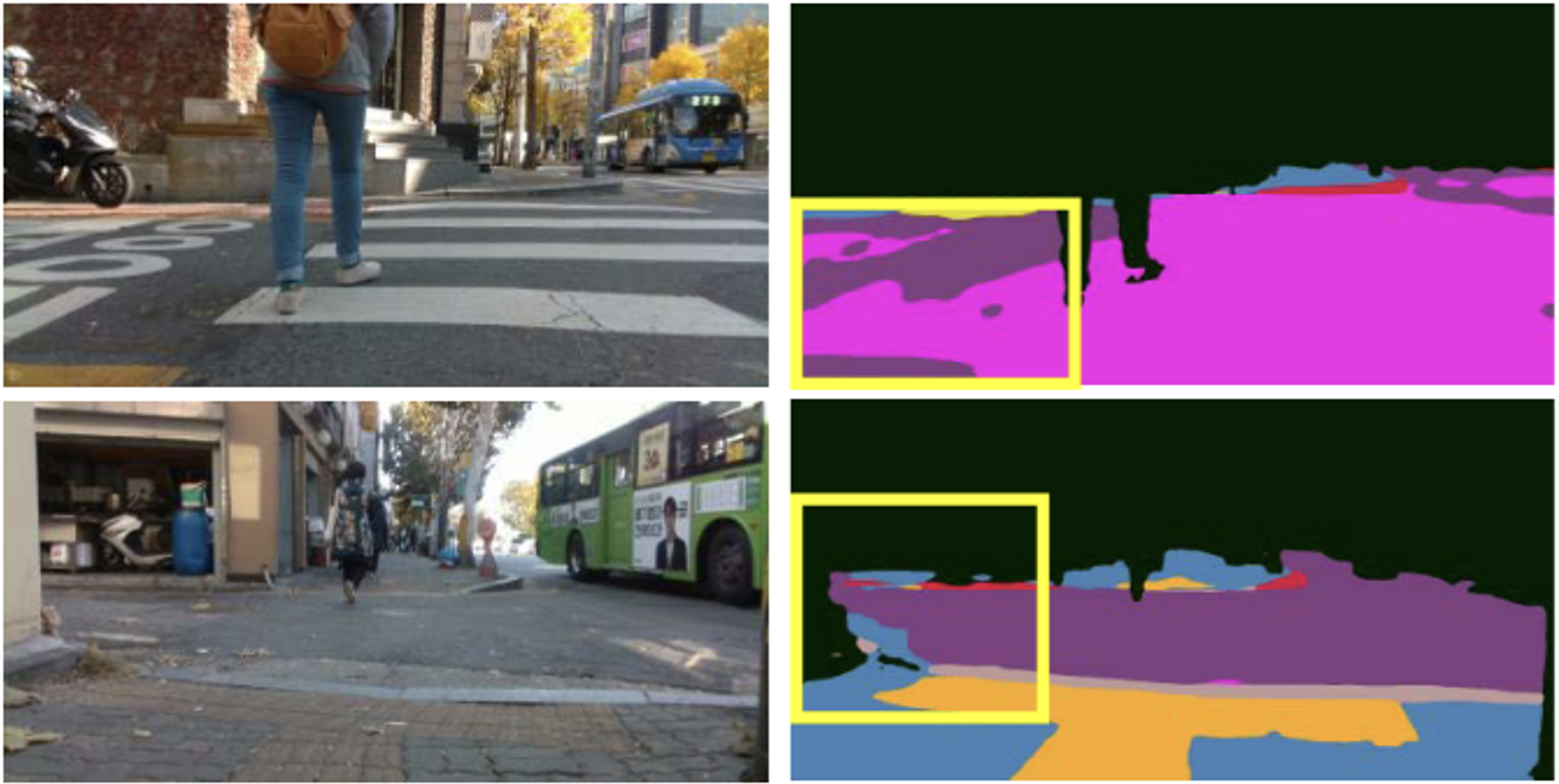

Youjin Jeon*, Kyusik Cho*, Suhan Woo, Euntai Kim (* Equal contribution)

AAAI Conference on Artificial Intelligence (AAAI-26), Singapore (Acceptance Rate: 17.6%)

Paper / bib

We propose A2LC, a novel active and automated label correction framework for semantic segmentation that integrates automated correction guided by annotator feedback with adaptive sample acquisition, achieving significantly higher efficiency and performance than prior active label correction (ALC) methods.


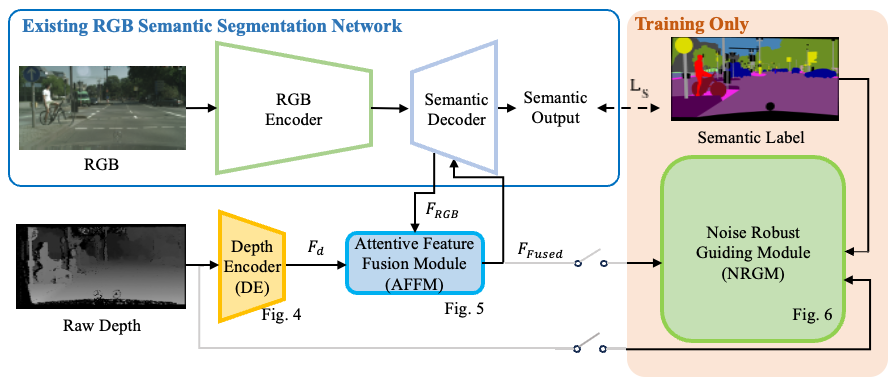

Suhan Woo, Junhyuk Hyun, Suhyeon Lee, Euntai Kim

International Journal of Control, Automation, and Systems, vol. 23, no. 12, pp. 3649–3661, 2025. (IF: 2.9 in JCR2024)

This paper proposes a real-time RGB-D semantic segmentation method that effectively encodes depth information and fuses it with RGB features to improve segmentation performance while maintaining computational efficiency.


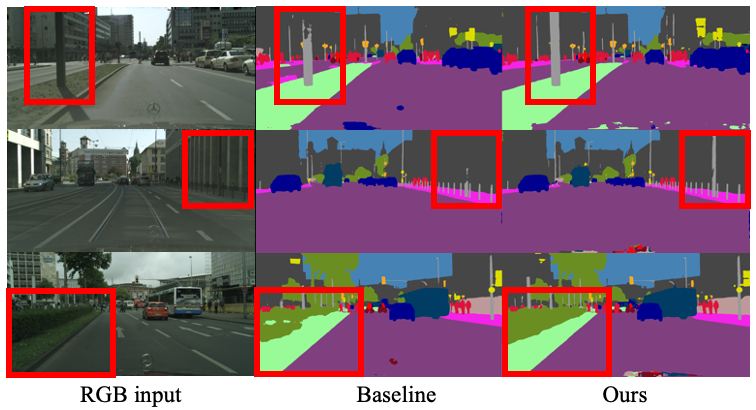

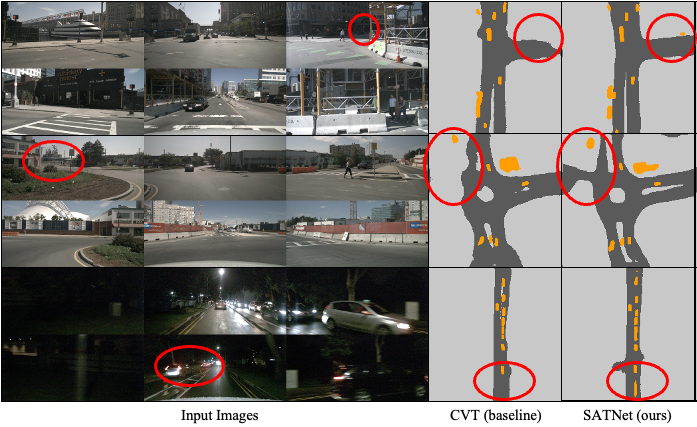

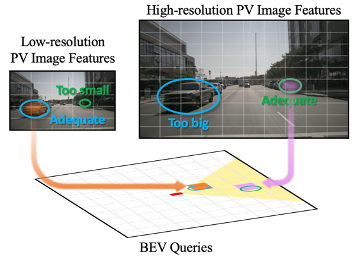

Suhan Woo, Minseong Park, Youngjo Lee, Seongwon Lee, Euntai Kim

IEEE Transactions on Intelligent Vehicles, vol. 10, no. 9, pp. 4467–4478, Sep. 2025. (IF: 14.3 in JCR2024)

Paper / bib

We propose a novel bird's-eye-view (BEV) segmentation network that adaptively utilizes features of various scales depending on the location in BEV space.


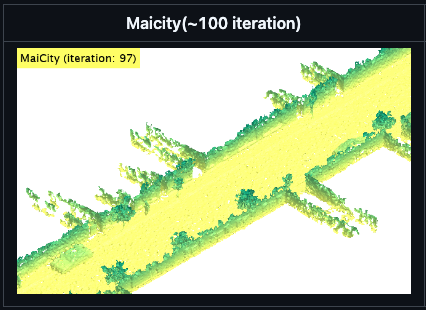

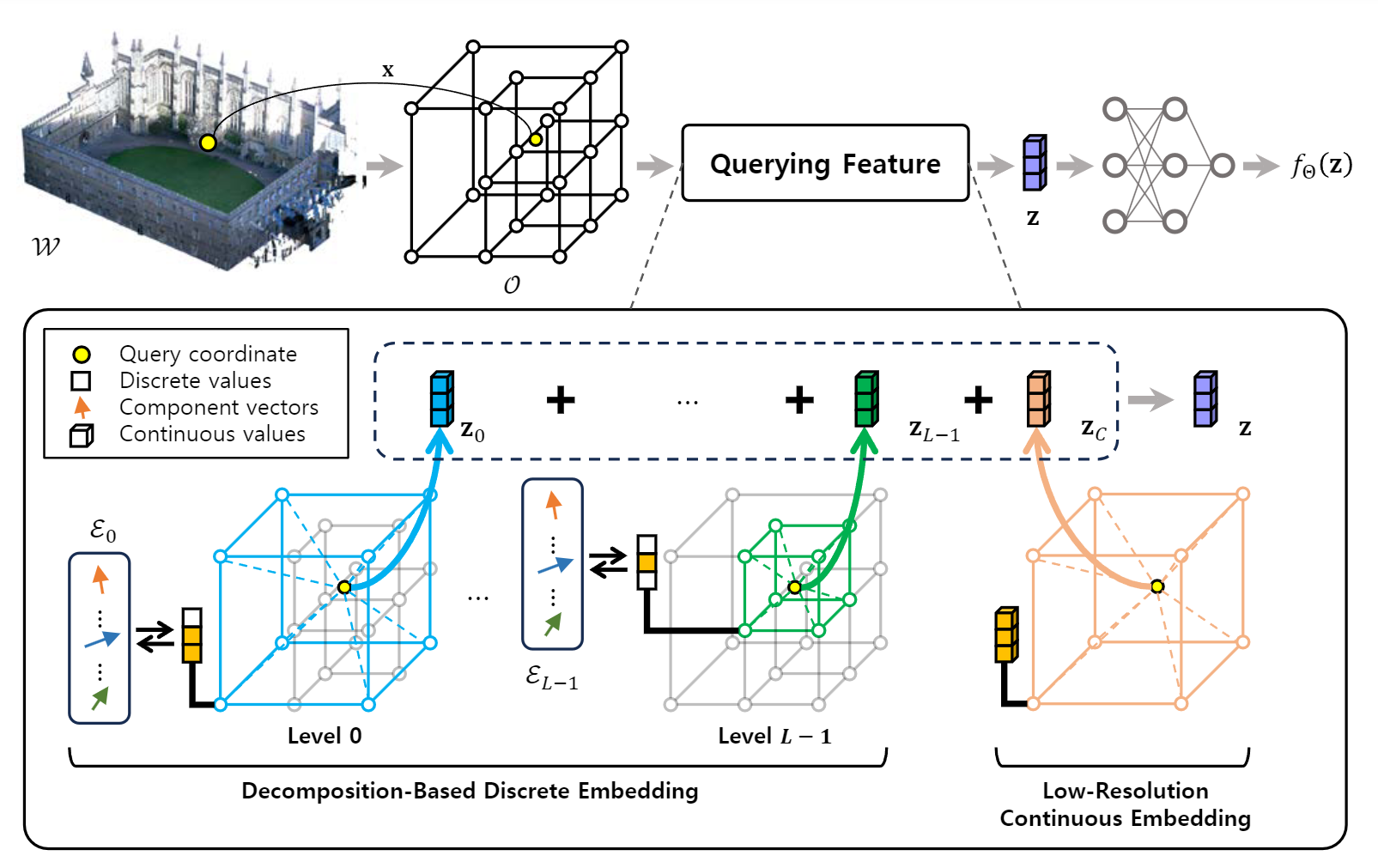

Minseong Park, Suhan Woo, Euntai Kim

European Conference on Computer Vision (ECCV 2024) (Acceptance Rate: 27.9%)

Paper / Code / bib

We propose a storage-efficient large-scale 3D mapping method that employs a discrete representation based on a decomposition strategy.


Junhyuk Hyun, Suhan Woo, Euntai Kim

IEEE Access, 2022 (IF: 3.4 in JCR2023)

Paper / bib

This paper proposes a real-time RGB-based street floor segmentation method that identifies traversable and non-traversable curbs to support mobile robot navigation in urban environments.


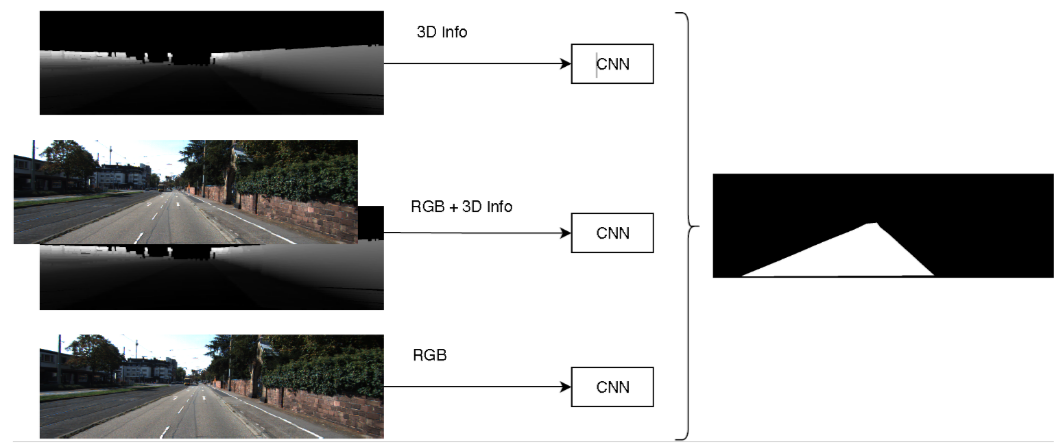

A. Hernández, Suhan Woo, H. Corrales, I. Parra, Euntai Kim, D. F. Llorca

IEEE Intelligent Vehicles Symposium (IV 2020), Las Vegas, United States

Paper / bib

We propose 3D-DEEP, a deep-learning architecture designed for road scene understanding using elevation patterns derived from disparity-filtered and LiDAR-projected images.